Nominally Hedged: 2.13.2026 | Your AI Is Solving the Wrong Error

Three cost buckets, two kinds of mistakes, and the $15 million post-mortem nobody writes.

There is a version of every business decision where you act when you shouldn’t, and a version where you don’t act when you should. The first one is visible. It shows up in write-downs, failed pilots, investor calls where the CFO explains what happened. The second one is invisible. It shows up in the margins you never captured, the contracts you overpaid, the cost structure that quietly compounded against you while you were busy optimizing things that didn’t move the needle.

The visible mistake gets all the attention. A drilling optimization tool that blows up a motor. A completions design that underdelivers on EUR. An AI model that tells you to pump faster and the well screens out. These are loud. Expensive. Career-defining in the wrong direction. The post-mortem gets a slide deck. Someone presents it at the next ops review. Lessons learned. Process updated. Controls tightened. I’ve been part of many!

The invisible mistake is worse, and nobody writes a post-mortem for it, because nobody knows it happened. You paid $0.50 per gallon too much on surfactant for eighteen months because nobody modeled the feedstock spread. You renewed a compression contract at rates 15% above where the market had moved because the Ops team didn’t have visibility into vendor utilization. You let an escalation clause compound for three years because the original negotiation happened before you had data on basin-level pricing dynamics. These aren’t dramatic. They don’t show up in a single quarter. They just bleed. Slowly. Across every line item on the LOE statement and AFE, every month, compounding into millions that never make it to the distribution line.

Most of the AI conversation in oil and gas is focused on avoiding the visible mistake. How do I make sure this model doesn’t break something? How do I validate the output before it touches an $8 million well decision? Those are real concerns. But they are concerns about acting on something that turns out to be wrong.

The question nobody is asking is: what am I missing by not acting? What margin am I leaving on the table because I don’t have the information to act on it? What is the cost of not knowing what my contracts should look like relative to market fundamentals?

That asymmetry is the whole game. And it’s where the real moat lives.

A week ago I argued that enterprise AI in oil and gas is mostly a Larry David carpool lane scheme. You pay a vendor to sit in the passenger seat, keep the same headcount, add a license fee to the G&A line, and call it transformation. The car costs more and moves no faster. The real path is convergence: collapse the SME and the builder into a single node, build the automation internally, reduce headcount with a clear ROI, and keep the G&A trend moving in the direction the last decade established. No vendor. No license fee. Fewer people in the car.

That gets you a first-mover advantage. But first-mover advantages in oil and gas have a shelf life. Once someone proves a workflow automation works, everyone copies it. The 530-to-750 wells-per-engineer ratio becomes table stakes the way the simul-frac became table stakes. The real competitive moat comes from something different: a differentiated view that nobody else has, embedded in a tool that repeats it at scale.

I promised three buckets. Here they are.

The Three Buckets

The industry is focused on maximizing cash flow. If you accept that premise, and at this point there is no alternative premise, then every AI application has to connect to one of three levers:

Reduce Capital Intensity. Think of this as maintenance capital. How do I inject the minimum amount of capital required to meet my relatively flat production profile? Two sides: drilling and completion efficiency, and contracting those services.

Increase Topline Production. Better recovery per well, improved production uptime, artificial lift optimization, completions design that actually moves EUR.

Boost Bottom Line by Cutting LOE. Reduce the money it costs to produce the well. Chemicals, compression, water handling, workover frequency. Every dollar off the LOE line goes straight to free cash flow.

Simple enough framework. Three buckets. But the buckets are not created equal, and the reason they’re not has everything to do with the structure of the errors inside them.

Buckets 1 and 2: The Service Provider Problem

Reducing cycle time and increasing production are the categories that get the most airtime at conferences. Drill faster. Frac smarter. Optimize your ESP settings with machine learning. Design completions with AI. These are exciting. They are also, for an E&P building in-house, structurally disadvantaged.

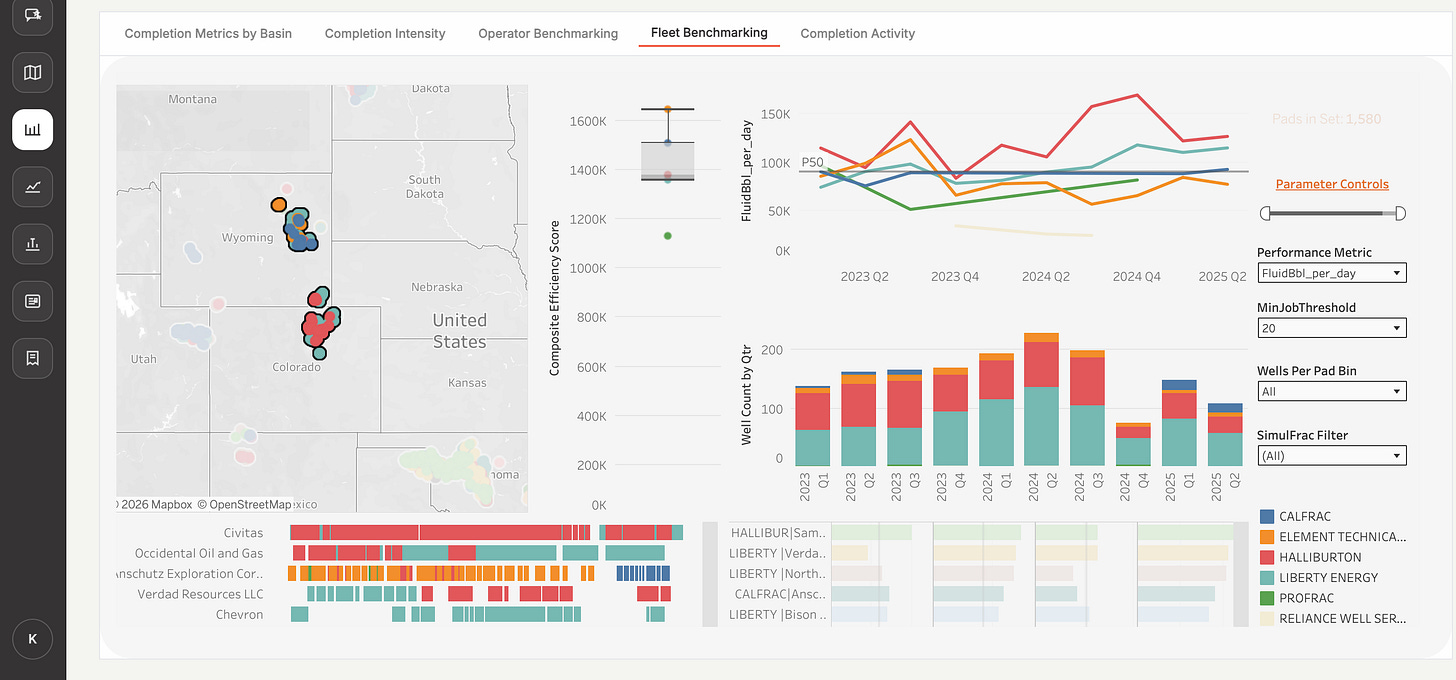

Oil and gas relies on outside service providers for most of the physical work that drives Buckets 1 and 2. The drilling contractor runs the rig. The pressure pumper executes the frac. The ESP manufacturer designs and installs the lift system. These companies don’t just have more data than you. They have better data. A model trained across 30 operators and 4,000 wells sees more geological variation, more equipment configurations, more failure modes, and more edge cases than your internal dataset ever will. Your 40-well model doesn’t just lose on volume. It loses on statistical robustness. Diversity of input reduces overfitting, and that is a structural advantage you cannot replicate internally. And because service providers develop the tools, they disseminate quickly to your competition. Your neighbor gets the same algorithm next quarter.

This is the relativity problem I’ve written about before. You don’t move relatively. And relativity is the only thing that matters when you’re trying to build a competitive moat. If everyone gets the same drilling optimization tool from the same service provider, nobody has an edge. You have spent money and effort to arrive at the same place as your peers. The carpool lane, but everyone has a passenger now.

But the second problem is more important than the service provider data advantage. It’s the structure of the risk.

The Two-Sided Bet

Using AI to improve drilling times, completion cycle times, completions design, or ESP run settings is an $8 million decision per well where the errors cut in both directions.

If the model tells you to drill more aggressively and you blow out a motor, that’s the visible mistake. You acted on something that turned out to be wrong. AFE over budget. Post-mortem. Slide deck at the ops review. If the model says a completions design will boost EUR by 15% and it delivers 5%, you just allocated capital to a design that underperformed. Expensive. Measurable. Everyone knows about it.

But if the model could have told you something useful and you didn’t trust it, you left barrels in the ground. That’s the invisible mistake. The well that could have been 15% better but wasn’t, because you drilled the conservative design. Nobody writes that post-mortem because nobody knows the counterfactual existed.

Both errors are expensive. Both are hard to detect in real time. Both impact capital decisions where the feedback loop is measured in months, not minutes. And critically, both have to be managed simultaneously. You can’t just optimize for one without exposing yourself to the other.

This is a two-sided bet. And two-sided bets are hard to build AI around, because the tolerance for error on the downside constrains how aggressive you can be on the upside. The risk management overhead is enormous. The validation requirements are extreme. And the ROI case is muddy because you’re always weighing the cost of the mistake you might make against the value of the improvement you might capture.

That’s Buckets 1 and 2. Important. Real. But structurally difficult for in-house AI to win on, and structurally likely to get commoditized by service providers who have the data advantage.

Bucket 3: The One-Tailed Bet

Now contrast that with AI that helps optimize your cost structure through automated market monitoring, bid optimization, and contracting intelligence.

Here, the error structure is fundamentally different. And that difference is the entire strategic argument for where AI creates a defensible moat rather than a replicable efficiency.

If my model overestimates my buying power, if it tells me conditions are more favorable than they actually are and I bid too aggressively, the decision arc is self-correcting. I submit an aggressive bid. The vendor says no. I adjust the model or explore another option. The cost of being wrong in this direction is a failed bid and a phone call. Feedback is immediate, binary, and cheap. This is also, by the way, one of the reasons I keep beating the drum about disintermediating the middle man in procurement. Every intermediary between you and a “no” from the vendor is a delay in the feedback loop that makes your model smarter.

The mistake that should keep you up at night is the other one. The invisible one. Where you think your position is weaker than it actually is and overpay relative to market fundamentals and your unique buying power. There is no vendor calling you to say, “Hey, you could have gotten this 12% cheaper.” There is no natural correction mechanism. The overpayment just compounds. Quarter after quarter. Across chemicals, compression, water hauling, workover rigs, every line item on the LOE statement.

And here’s the critical distinction that makes this a strategically different AI problem from Buckets 1 and 2: you have to buy this stuff from someone. Production chemicals, compression services, water hauling. These are not optional. The question is never whether to spend the money. It’s whether you’re spending the right amount. The only error that actually costs you money is the one where you leave margin on the table. It’s a one-tailed bet.

When you only need to worry about error in one direction, you can build systems that are far more aggressive, far more precise, and far more deployable. You don’t need the model to be perfect. You need it to be directionally correct on the side that costs you money to be wrong. The other side corrects itself on contact with the market.

One-Tailed Does Not Mean Simple

I want to be careful here because the argument so far might sound like Bucket 3 is the easy button. It is not. The error structure is favorable. The moat dynamics are favorable. But the actual work of building the intelligence layer is enormous, and for reasons that are specific to how oil and gas operates.

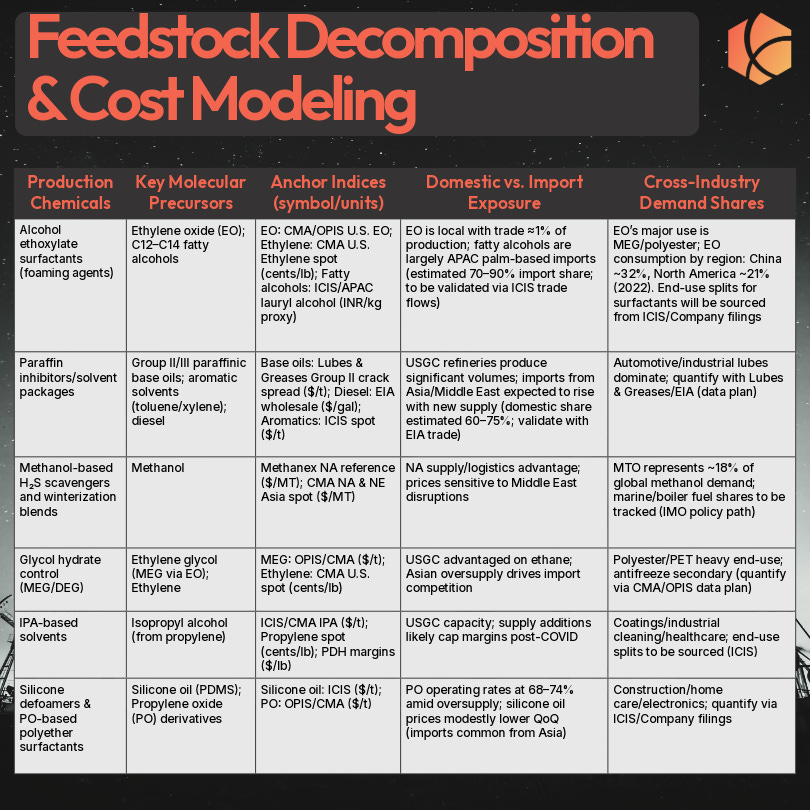

The first reason is the service-based nature of the business itself. Roughly 80% of the CAPEX and OPEX budget flows through service-based categories. That means what you are actually trying to optimize is Total Cost of Ownership, not just price. And TCO is a fundamentally harder problem. It depends on uptime performance, staffing quality, equipment condition, contract primary term, infrastructure proximity, employee competency. The vendor quoting you $5,000 per month less on compression might cost you more in aggregate if their uptime is 95% instead of 97%. Apples-to-apples comparison across vendors requires performance benchmarking data, operational reliability data, and contract structure data that most operators do not systematically collect. You have to go find unique data sources that allow you to quantify this in a way that makes the comparison objective rather than anecdotal. That is a lot of work before the model even starts learning.

The second reason is what I think of as counting cards. You have to completely assess the deck. A BATNA framework helps here, but deploying it requires cataloging and tracking every potential counterparty across every line item on your AFE and OPEX budget. That alone is a substantial data exercise. But it gets harder. Because the counterparty’s BATNA is other oil and gas companies, you also need a data-driven approach to assessing every operator they could sell that service to instead of you. Who else in the basin needs compression? Who else is buying methanol at volume? Where does your vendor’s next best customer sit relative to you on reliability, volume, and payment terms? And a large portion of both the vendor universe and the operator universe is private, which means you need a novel approach to assessing companies that don’t file 10-Ks or host earnings calls. This is not trivial. This is building an intelligence map of an entire market structure, most of which does not want to be mapped.

The third reason is the fundamental cost decomposition. To know whether a price is fair, you have to understand what goes into it. What are the raw components and feedstocks? What is a reasonable proxy for that market? What other industries besides oil and gas consume those inputs, and how are those end markets performing? Where are the logistical hubs and what are freight dynamics doing? For production chemicals, this means tracking natural gas to methanol spreads, MTO run rates in China, Gulf Coast utilization, hazmat freight premiums into Appalachia. For compression, it means understanding manufacturing lead times, steel and engine costs, fleet age demographics, and the vendor’s cost of capital. This is a full decomposition of the supply chain for every major cost category. And by the way, this analysis fits naturally with the direct sourcing and insourcing evaluation. If you understand the cost structure deeply enough to negotiate intelligently, you also understand it deeply enough to evaluate whether you should be sourcing directly or bringing the capability in-house. The synergies between the intelligence layer and the strategic sourcing decision are real.

So yes, this is a lot. Three interlocking data problems, each one substantial on its own.

But here is where the one-tailed nature of the bet rescues you again. I do not need to solve any of these problems in the absolute. I need to solve them in the relative. I do not need to know exactly what my buying power is. I need to know how it fits in the relative pecking order. I do not need to know exactly what my leverage is with Vendor A. I just need to know that it is greater than my leverage with Vendor B and Vendor C, so I can sequence my negotiations correctly and construct my bids with the right level of aggression for each counterparty.

Relative accuracy is a dramatically lower bar than absolute accuracy. And in a world where most operators have zero systematic intelligence on any of these dimensions, even a directionally correct model puts you in a fundamentally different negotiating position than your peers.

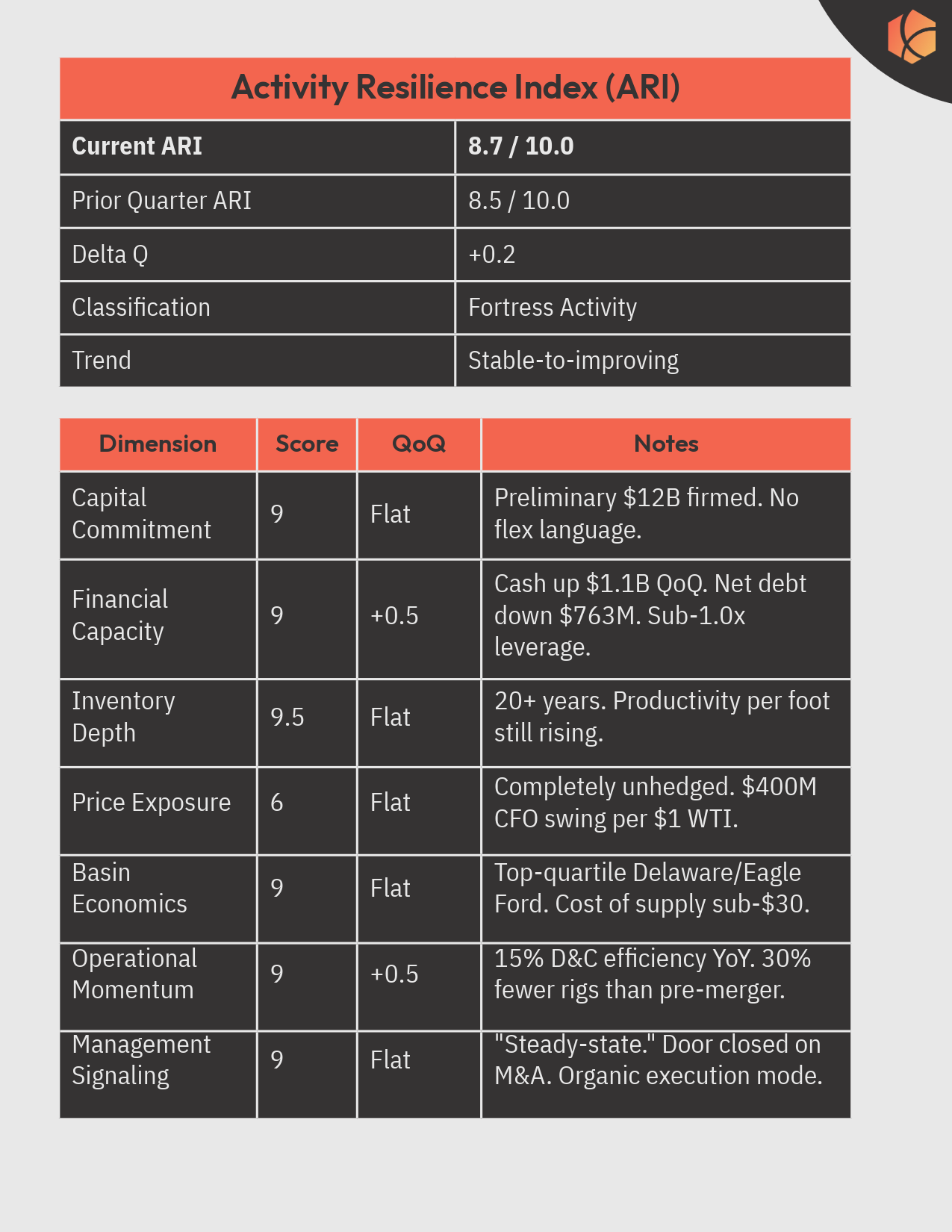

To be clear, I am biased here. This is what Kalibr does. This is why we have spent a significant amount of time studying these markets, building Kalibr Pulse, and developing the algorithms that help on these cost centers. But I think the logic is founded independently of who builds the tools. And I am largely putting my money where my mouth is.

Why This Is the Moat

In Buckets 1 and 2, your drilling data is roughly as good as your neighbor’s. Your type curves look the same. Your service providers are iterating on the same models for everyone. The service provider has a structural data advantage and a structural incentive to spread the gains across their customer base. Anything you build gets commoditized.

Bucket 3 is the opposite. Your cost structure is proprietary. Your vendor relationships are specific to your acreage position, your basin, your volume commitments, your contract history. Your buying power is a function of your scale, your operational rhythm, your pad complexity, your credit quality. None of that is replicable by your neighbor because none of it is the same as your neighbor’s. And unlike drilling or completions, where wider data dispersion across operators makes a model better, in contracting it makes it worse. What compression should cost you given your volume, your vendor history, and your basin dynamics is not improved by knowing what someone in the Haynesville paid. The “bias” in your procurement data isn’t bias. It’s the signal.

The data that feeds an AI contracting model is inherently proprietary: your invoices, your contract terms, your bid history, your vendor performance data, overlaid on market intelligence about feedstock costs, utilization rates, freight differentials, and vendor financial health. This is the kind of data that I wrote about in the Kalibr Sweep last October when we broke down methanol economics. Know what it costs the producer to make the molecule, what it costs you not to buy it, and how both numbers move with feedstock, utilization, and freight. The side with better information captures the margin.

The companies that build this capability will have structurally lower LOE that their competitors cannot explain and cannot replicate. Because you can copy someone’s frac design. You cannot copy their intelligence on what compression should cost them in the Delaware Basin in Q3.

What’s Next

So we have the strategic logic. G&A convergence gets you lean. Bucket 3 contracting intelligence gets you a moat. The error structure is asymmetric and favorable. The data is proprietary. The complexity is real but tractable because you only need relative accuracy, not absolute.

What we have not done yet is the financial case. Over the last two weeks, we have covered two distinct AI applications: the G&A convergence play from Part I and the contracting intelligence play from today. Next week, I want to put both of those into a financial framework. How do you differentiate an organization from a fundraising perspective when it employs AI across both of these dimensions? What does the cash flow impact actually look like when you model G&A reduction alongside structurally lower LOE? And how does that story land with the capital providers, whether PE sponsors, family offices, or public market investors, who are deciding which teams get backed and which ones don’t?

Then we will decompose an actual cost center and walk through building one of these tools. Not the theory. The data inputs, the architecture, and the product. Because the invisible mistakes are not going to find themselves.